Hi everyone,

I’ve been going through the positional encoding part of the Transformer lectures. The explanation given was that using only sin can cause ambiguity since, for example, sin(0°) = sin(180°).

For d dimension, sin(pos / 10000^(2i/d)), if I check across positions (e.g., pos=0 ,pos=180), I do see different values because of the scaling factor “pos”

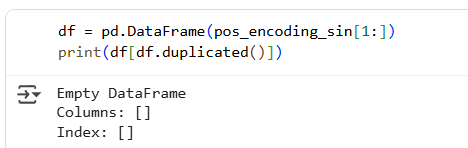

I even tried generating a dataframe of positional encodings using only sin , and all the rows for different positions turned out unique.

So my question is:

-

If

sin-only encodings are already unique across positions (at least within practical sequence lengths), what additional benefit do we get by addingcos? -

Is the main reason mathematical stability?

Would love if someone can clarify why both functions are necessary when sin alone seems to work without collisions.